Table of contents

- Measuring AI Impact on Business Productivity

- What is measuring AI impact on productivity?

- Why it matters: The AI Measurement Gap is now a leadership problem.

- How do you measure AI impact on productivity? (A practical step-by-step framework)

- Why adoption metrics fall short: The gap between tool usage and business impact

- What to measure instead: Redefining productivity for the AI era

- How do you create an objective system of record for AI measurement?

- Measure AI impact with ActivTrak

- Interpreting results: Common AI measurement pitfalls and how to avoid them

- A 30-day plan to quantify AI's productivity impact with evidence

- Looking ahead: Measure the change, then act with confidence

Measuring AI Impact on Business Productivity

You invest in AI, but is it actually working? This guide shows you how to measure the impact.

Key takeaways:

- Measuring AI impact on productivity means connecting AI usage to changes in workflows, focus, capacity and outcomes — not just tracking adoption.

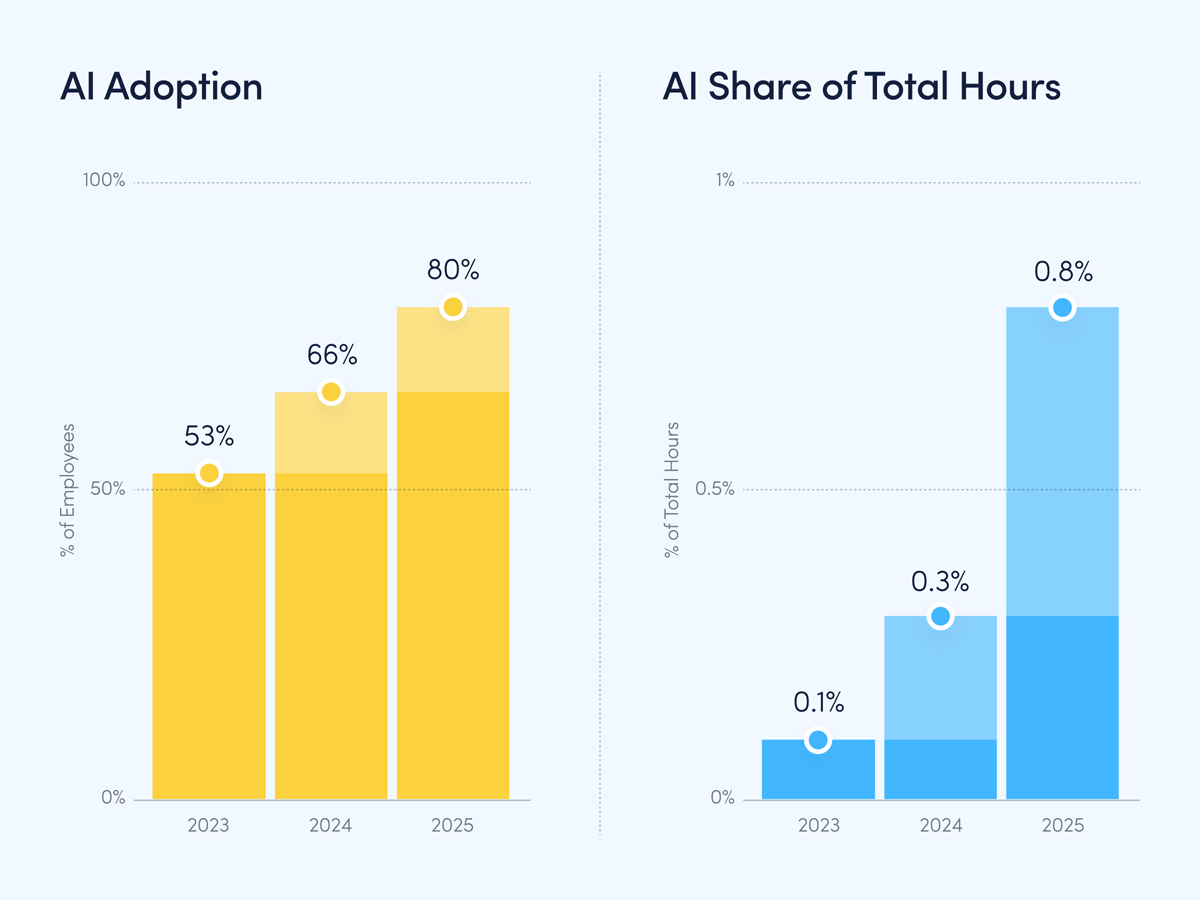

- In ActivTrak Productivity Lab data, AI adoption reached 80% and time spent in AI tools increased 8x. At the same time, focus efficiency declined to 60% and collaboration increased 34%.

- Together, these trends highlight an AI Measurement Gap: Many organizations can see AI usage but still struggle to quantify how (and where) that usage is affecting productivity and workforce capacity.

- A practical approach allows leaders to set a baseline, track workflow change and evaluate impact using a scorecard that includes productivity, performance, quality, focus and governance.

Every organization is making the same bet right now: AI will drive meaningful gains in productivity and performance. Yet 80% of companies aren’t actively measuring the ROI of AI. Adoption is easy to track. Impact is not.

AI tools are spreading fast and investment is accelerating. But the data many leaders rely on is fragmented, trapped in individual tools and disconnected from how work actually happens. Without an objective view across people, tools and AI agents, organizations struggle to answer a basic question: Is our AI investment actually delivering on the productivity promise?

Measuring AI impact on business productivity requires more than counting licenses, logins or time saved on isolated tasks. It requires understanding where AI is truly embedded in workflows and how it relates to capacity, performance, focus and outcomes.

What is measuring AI impact on productivity?

Measuring AI impact on productivity is the practice of tying AI usage to evidence-backed changes in how work gets done and what results are produced. It goes beyond tool metrics (seats, prompts, time in tool) to answer outcome questions. Is output faster? Is quality improving? Is capacity expanding? Is high-value focused work increasing, or do employees use the extra time for meetings and multitasking? These are the productivity questions effective AI measurement answers.

In other words, measuring AI impact is what separates activity from results. It’s the difference between seeing AI is in use and knowing if it improves the work that actually matters.

Why it matters: The AI Measurement Gap is now a leadership problem.

One reason this measurement problem persists is that the prevailing story about AI and modern work is incomplete. Many leaders assume AI makes the workday lighter by taking over repetitive tasks, reducing friction and increasing output while lowering effort. However, this is not what objective behavioral data shows.

In ActivTrak Productivity Lab research spanning more than 443 million hours of work activity across 1,111 organizations, a different picture emerges. The lab’s fifth annual State of the Workplace report examined behavioral data for 163,638 employees over three years (from January 1, 2023 to December 31, 2025). The finding? Yes, the workday is shrinking. But work itself is denser, faster and more fragmented:

- AI adoption reached 80% of employees (up from 53% two years prior), and average time spent in AI tools increased 8x. But few leaders measure the impact.

- Productive hours increased 5% (to 6h 36m daily) even as the average workday shrank 2%.

- Collaboration surged 34% and multitasking rose 12%.

- Focus efficiency declined to 60% (a three-year low). The average focused session fell to 13 minutes 7 seconds, down 9% from 2023.

- Burnout risk dropped 22% (to 5%), while disengagement risk rose 23% (nearly 1 in 4 employees).

The takeaway is not “AI isn’t working.” It’s that AI use is often accompanied by a shift in how work happens. While output may rise, the dataset suggests it comes at the cost of focus and attention. Rather than serving as a substitute for existing work, AI adds another productivity layer.

This creates a leadership challenge. Executives aren’t just managing more work. They’re governing amplified work in an environment where focus, capacity and outcomes are all at stake. What many organizations still lack is visibility into how AI usage shows up inside workflows — and what that implies for key productivity metrics.

This gap between AI adoption and understanding its real impact is what the ActivTrak Productivity Lab calls the AI Measurement Gap. Closing it requires visibility. By understanding which employees use which tools, and to what effect, leaders can govern toward outcomes that actually matter.

How do you measure AI impact on productivity? (A practical step-by-step framework)

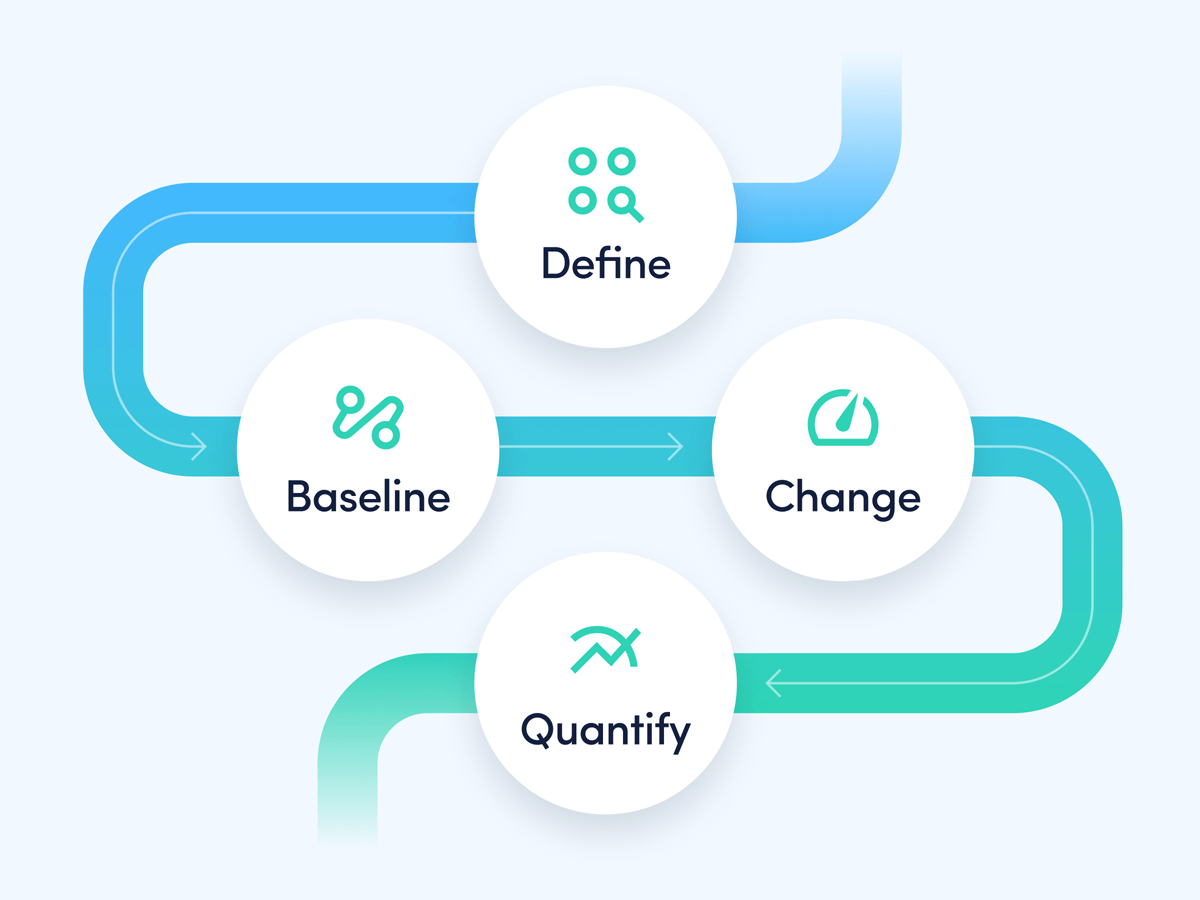

A credible measurement program connects adoption to workflow change to impact. Use the four-phase model below to build a repeatable system you can run across teams and processes.

- Define: Identify which workflow to measure alongside AI usage.

- Baseline: Establish current work patterns, productivity constraints, focus conditions and capacity bottlenecks before scaling AI.

- Change: Track where AI is used and how workflows shift as teams integrate it into work.

- Quantify: Determine if those changes are associated with improvements (or declines) in productivity, capacity, performance, focus, quality and compliance.

Step 1: Define the workflow you are measuring (not just the tool).

Start with a workflow that has measurable throughput and visible handoffs, such as customer support case resolution, sales proposal creation, marketing campaign production, billing reconciliation or HR recruiting. Measuring tool usage without defining the workflow creates noisy data and weak conclusions.

Step 2: Set a baseline that includes focus and collaboration.

Choose baseline data that goes beyond output and cycle time, and include conditions that drive sustainable performance. For example, Productivity Lab data shows focus efficiency often declines while collaboration time increases. Set baselines to capture focused work patterns, meeting and messaging load and multitasking signals alongside productivity measures.
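A baseline of this kind can be sketched in a few lines of Python. The field names and weekly figures below are hypothetical, and `focus_share_pct` is a simple hours ratio assumed for illustration; it is not ActivTrak's focus efficiency metric.

```python
from statistics import mean

# Hypothetical pre-rollout weeks for one team (hours per person, cases per week).
weeks = [
    {"focus_hours": 18, "collab_hours": 9, "total_hours": 38, "cases": 110},
    {"focus_hours": 20, "collab_hours": 10, "total_hours": 40, "cases": 120},
    {"focus_hours": 16, "collab_hours": 11, "total_hours": 42, "cases": 130},
]


def build_baseline(weeks: list[dict]) -> dict:
    """Summarize focus, collaboration and output over the baseline weeks."""
    total = sum(w["total_hours"] for w in weeks)
    return {
        # Assumed definition: share of all logged hours spent in focused work.
        "focus_share_pct": 100 * sum(w["focus_hours"] for w in weeks) / total,
        # Assumed definition: share of all logged hours spent collaborating.
        "collab_share_pct": 100 * sum(w["collab_hours"] for w in weeks) / total,
        "cases_per_week": mean(w["cases"] for w in weeks),
    }


baseline = build_baseline(weeks)
print(baseline)
```

Capturing focus and collaboration next to output means a later comparison can show whether throughput rose while focused time fell.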

Step 3: Measure adoption plus integration maturity.

Track who uses AI, how consistently they use it and where it appears in the workflow. Do employees use AI to draft, summarize, code, analyze, communicate with customers or support decisions? Adoption metrics are necessary but insufficient on their own — you also need to see whether teams progress from experimentation to durable workflow integration.
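One way to separate experimentation from durable integration is to look at how consistently a person uses AI across the observation window. The sketch below assumes weekly usage counts are available. The tier names and the 50% and 75% cutoffs are illustrative assumptions, not thresholds from the Productivity Lab.

```python
def integration_maturity(active_weeks: int, observed_weeks: int) -> str:
    """Classify AI usage consistency from the share of weeks with any AI use."""
    if observed_weeks <= 0:
        raise ValueError("observed_weeks must be positive")
    share = active_weeks / observed_weeks
    if share == 0:
        return "no usage"
    if share < 0.5:  # assumed cutoff for sporadic use
        return "experimenting"
    if share < 0.75:  # assumed cutoff for regular but not habitual use
        return "developing"
    return "integrated"


# Hypothetical employees: weeks with AI use out of 8 observed weeks.
team = {"ana": 0, "ben": 2, "chi": 5, "dev": 7}
for person, active in team.items():
    print(person, integration_maturity(active, 8))
```

Running the same classification each month shows whether teams are moving toward the integrated tier or stalling at experimentation.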

Step 4: Connect AI usage to impact with a scorecard.

Use a scorecard that tracks both business outcomes and employee behaviors. Without this balance, it’s easy to fall into common measurement traps — mistaking activity for real value, assuming saved time is used well or overlooking the extra effort spent on review and rework.
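As a minimal sketch of such a scorecard, the Python below compares a baseline period with a current period for one workflow. The metric names, sample values and "higher is better" flags are illustrative assumptions, not ActivTrak definitions or Productivity Lab data.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float
    current: float
    higher_is_better: bool


def pct_change(baseline: float, current: float) -> float:
    """Relative change from baseline, in percent."""
    return (current - baseline) / baseline * 100


def score(metric: Metric) -> str:
    """Label a metric as improved, declined or flat relative to baseline."""
    change = pct_change(metric.baseline, metric.current)
    if abs(change) < 1e-9:
        return "flat"
    improved = (change > 0) == metric.higher_is_better
    return "improved" if improved else "declined"


# Hypothetical values for a single support workflow.
scorecard = [
    Metric("cases_resolved_per_week", 120, 138, higher_is_better=True),
    Metric("avg_cycle_time_hours", 20.0, 17.0, higher_is_better=False),
    Metric("rework_rate_pct", 8.0, 10.0, higher_is_better=False),
    Metric("focus_share_pct", 64.0, 60.0, higher_is_better=True),
]

for m in scorecard:
    print(f"{m.name}: {pct_change(m.baseline, m.current):+.1f}% ({score(m)})")
```

In this made-up example, throughput and cycle time improve while rework and focus decline, which is the kind of mixed result a single KPI would hide.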

Why adoption metrics fall short: The gap between tool usage and business impact

Most AI rollouts start with an understandable focus: Get the tool in people’s hands and drive usage. This creates dashboards full of activity, from seats assigned and prompts submitted to copilots enabled and assistants invoked. While these indicators are useful, they stop short of what leaders actually need.

AI impact is a workflow-level question. One team may use AI frequently without improving throughput, quality or customer outcomes while another sees meaningful gains from infrequent use. The difference? How AI is integrated. Using AI at the right moments of the process — handoffs, reviews, decision points and repeatable administrative work — makes all the difference.

The practical challenge is attribution. When work is distributed across email, chat, documents, tickets, meetings, CRMs, design tools and AI assistants, leaders often lack a unified view. The key is to connect AI activity to work patterns and results. This allows leadership to break the unproductive cycle of high expectations, unclear measurement and decisions about governance and scale made without evidence.

What to measure instead: Redefining productivity for the AI era

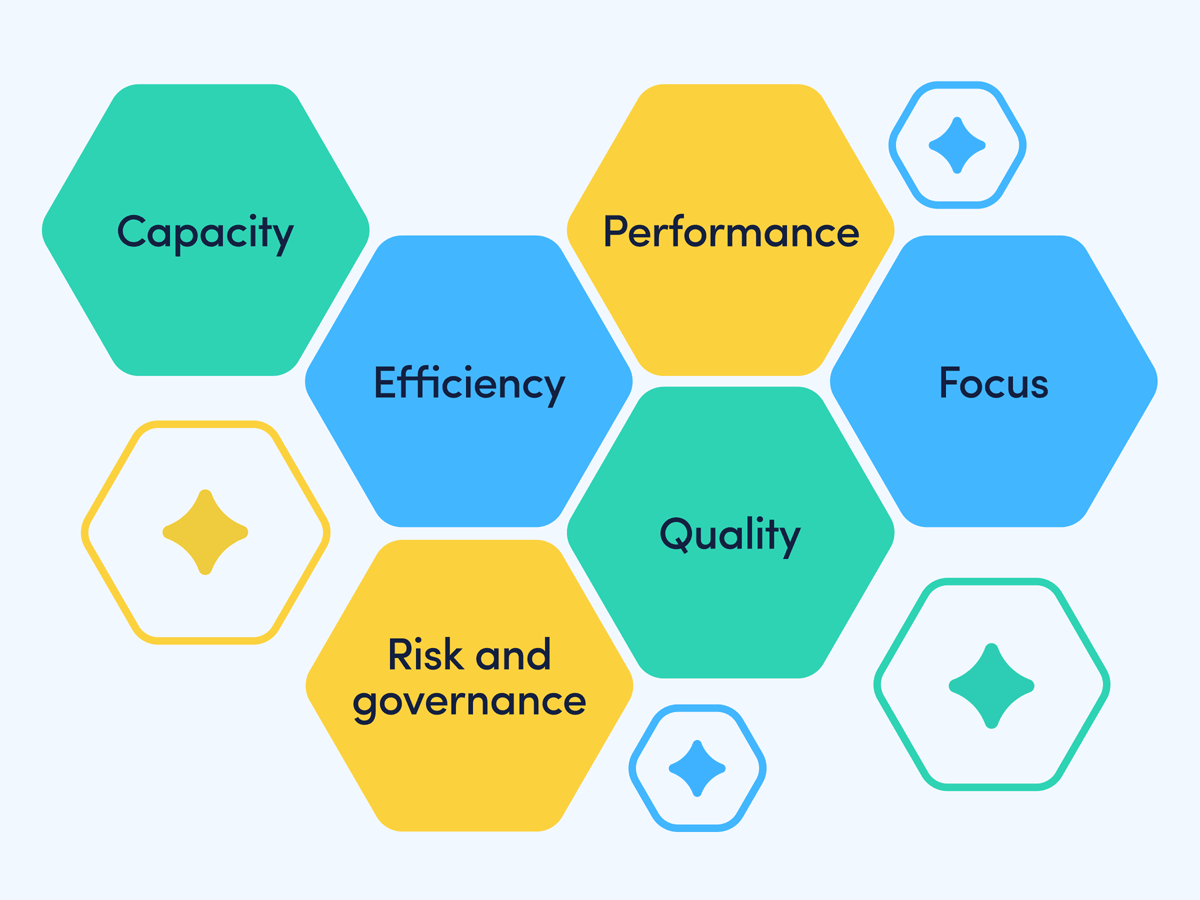

Speed matters, but faster is not the same as better. In an AI-enabled organization, productivity is best treated as a portfolio of measurable effects:

- Capacity: How much high-value work can a team complete with the same headcount?

- Efficiency: How much time is recovered from low-value effort and reallocated to high-value tasks?

- Quality: Are outputs more accurate, consistent and aligned to standards?

- Performance: Are tasks moving faster across real workflows (not just within single tools)?

- Focus: Are teams sustaining focused work, or is work more fragmented?

- Risk and governance: Is AI usage approved, compliant and aligned to policy?

This definition shifts measurement away from isolated “time saved” claims and toward a system-level view of how work changes when people and AI operate side by side.

Use the following categories to structure AI measurement in a way that holds up to executive scrutiny.

1) Adoption and workflow integration

Adoption is necessary but insufficient. The goal is to understand whether usage is sporadic or embedded into repeatable workflows. Answer:

- Who is using AI tools, and how consistently?

- Which teams show a progression from experimentation to integration?

- Where does AI appear in the workflow?

2) Productivity and efficiency signals

Efficiency is best measured as a shift in effort allocation. The key question is not “Did we save minutes?” but “What did we do with the recovered capacity?” Look for:

- Changes in time spent on low-value administrative work versus core value-creating work.

- Evidence of reduced context switching and fewer redundant steps.

- Changes in collaboration load (for example, fewer meetings required to align).

3) Focus and fragmentation

Because focus efficiency has fallen to 60% (a three-year low) while collaboration surged, leaders should treat focus as a managed resource rather than a default state. Measurement should capture whether work is more focused or more fragmented as AI and collaboration tools become more embedded. Keep track of:

- Focus efficiency trends and changes in focused session length.

- Meeting and messaging load changes alongside AI usage growth.

- Multitasking patterns in roles with heavy AI adoption.

4) Quality and rework

AI can accelerate first drafts but introduce errors, inconsistency or compliance issues. Measurement should capture whether AI reduces rework or simply shifts effort from drafting to correcting. Watch for:

- Revision patterns and rework signals in key workflows.

- Accuracy checks and adherence to standards.

- Downstream impacts, such as fewer escalations or corrections.

5) Capacity and performance outcomes

The strategic promise of AI is its ability to expand capacity, allowing teams to deliver more value with the same resources. This often shows up as increased throughput, faster cycle times or improved service levels, depending on the function. Look for:

- Throughput in repeatable workflows (such as cases handled, analyses completed or campaigns launched).

- Cycle-time improvements (like the time from request to completion).

- Performance consistency across teams and time periods.
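Throughput and cycle time can be derived directly from request and completion timestamps. The sketch below assumes each work item is exported as a pair of ISO 8601 timestamps; the sample cases are hypothetical.

```python
from datetime import datetime
from statistics import median

# Hypothetical (requested_at, completed_at) pairs for one workflow and period.
cases = [
    ("2025-03-03T09:00:00", "2025-03-03T15:00:00"),
    ("2025-03-03T10:00:00", "2025-03-04T10:00:00"),
    ("2025-03-04T08:30:00", "2025-03-04T20:30:00"),
]


def cycle_hours(requested_at: str, completed_at: str) -> float:
    """Elapsed hours from request to completion."""
    delta = datetime.fromisoformat(completed_at) - datetime.fromisoformat(requested_at)
    return delta.total_seconds() / 3600


durations = [cycle_hours(start, end) for start, end in cases]
throughput = len(cases)  # items completed in the period
median_cycle = median(durations)  # less sensitive to outliers than the mean

print(f"throughput={throughput}, median cycle time={median_cycle:.1f} h")
```

Computing the same two numbers per team and per period gives a simple view of performance consistency.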

6) Employee well-being

It’s important to interpret well-being signals within the context of capacity. Track whether automation and improved work patterns free capacity, and whether that capacity is redeployed toward meaningful outcomes. Measure:

- Burnout risk and disengagement risk trends alongside AI usage patterns.

- Where underutilized capacity is concentrated by team and role.

- Whether workload density is increasing through collaboration and fragmentation rather than depth.

7) Governance, risk and sustainability

AI that improves short-term productivity but increases risk is not sustainable. Measurement must include whether tool usage is approved and policy-aligned, and whether people can maintain the new way of working without burnout. Track:

- Approved vs. unapproved AI usage patterns.

- Policy adherence and risk reduction signals.

- Change management indicators, including whether adoption is stable over time.
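The approved versus unapproved split can be expressed as a share of AI hours. This sketch assumes an allowlist of sanctioned tools and per-tool usage hours are available; the tool names and figures are hypothetical.

```python
# Hypothetical allowlist of sanctioned AI tools.
APPROVED_TOOLS = {"assistant-a", "copilot-b"}

# Hypothetical AI usage for one team, in hours per month.
usage_hours = {"assistant-a": 30, "copilot-b": 10, "chatbot-x": 10}


def unapproved_share_pct(usage: dict, approved: set) -> float:
    """Percent of AI hours spent in tools that are not on the allowlist."""
    total = sum(usage.values())
    if total == 0:
        return 0.0
    unapproved = sum(h for tool, h in usage.items() if tool not in approved)
    return 100 * unapproved / total


print(f"unapproved share: {unapproved_share_pct(usage_hours, APPROVED_TOOLS):.1f}%")
```

Tracking this share over time indicates whether policy and enablement are moving usage toward approved tools.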

How do you create an objective system of record for AI measurement?

Work has fundamentally changed. It’s no longer people moving tasks through applications, but rather a system of people and AI shaping workflows in real time. Yet many measurement programs still focus on the wrong metrics.

Tool-centric measurement shows which AI assistant is used but misses the harder questions leaders must answer:

- Where is AI actually embedded in the workflow?

- How does AI shape where time and effort go?

- Which roles, teams and processes show measurable changes in capacity, performance, focus or quality?

- Where is AI creating risk through unapproved usage or sensitive data exposure?

To answer these questions, leaders need full visibility — and a way to connect behavioral patterns to business outcomes. This shift requires a system of record for how work happens across people, applications and AI.

Effective AI measurement starts with establishing an organization-wide baseline of adoption. Which AI tools are used, by whom, and how often? The key is to quantify usage patterns across teams and roles and distinguish early experimentation from durable workflow integration.

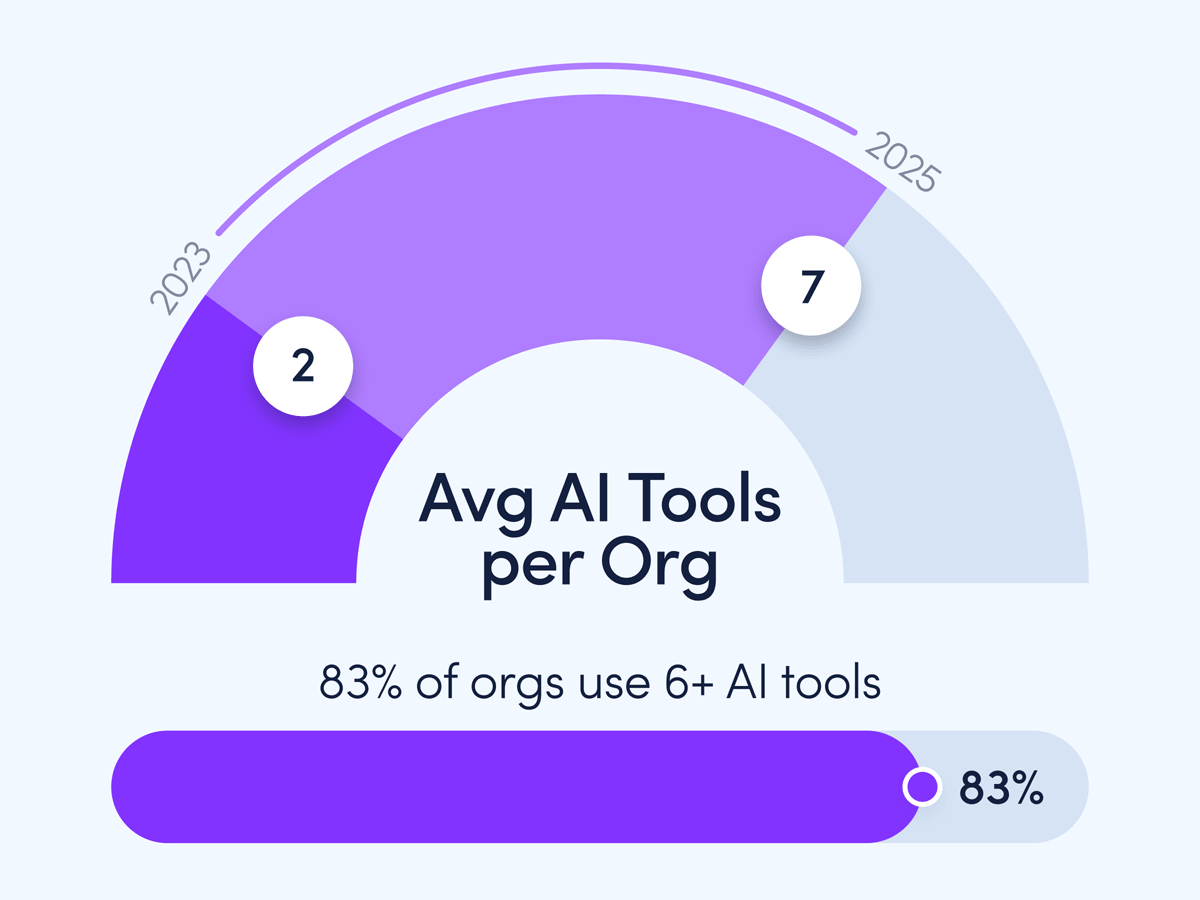

This matters because tool sprawl is now standard. The average organization uses 7 AI tools (up from 2 in 2023) and 83% use 6 or more. When employees work across multiple AI tools, oversight is harder to maintain without a unified view.

However, adoption is just the first step. Leaders must also analyze how AI usage aligns to measurable changes in work patterns. That includes signals tied to:

- Productivity: How are focused work, collaboration load and time spent in core applications changing?

- Capacity: Is there evidence teams can handle more high-value work with the same resources?

- Performance: Are there changes in the quality of work and how long it takes to complete?

In addition, organizations must ensure AI usage is approved and compliant. This matters because unapproved AI usage creates risk and makes measurement harder if leaders can’t see it.

The intensity question: finding the sweet spot

Usage volume is not inherently good or bad — it’s a variable to govern. Productivity Lab analysis identifies a “sweet spot” where employees spending 7-10% of total work hours in AI tools show the highest productivity (95%) of any usage tier (across 2023-2025 combined). Yet only 3% of users currently fall into that range, while 57% spend less than 1% of their total hours in AI tools.

This suggests a measurement and operating model opportunity: Move from tracking adoption rates to measuring AI effectiveness, then coaching and redesigning workflows toward effective, sustainable usage patterns.
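To see where employees sit relative to that range, usage intensity can be computed as AI-tool hours divided by total work hours. In this sketch the under-1% and 7-10% bands come from the analysis cited above. The other two bands and the sample hours are assumptions added so that every value falls into a tier.

```python
def ai_time_share_pct(ai_hours: float, total_hours: float) -> float:
    """Percent of total work hours spent in AI tools."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    return 100 * ai_hours / total_hours


def usage_tier(share_pct: float) -> str:
    """Bucket a usage share into intensity tiers."""
    if share_pct < 1:
        return "under 1%"
    if share_pct < 7:
        return "1% to under 7%"  # assumed intermediate band
    if share_pct <= 10:
        return "7-10%"  # the range the cited analysis associates with highest productivity
    return "over 10%"  # assumed upper band


# Hypothetical weekly hours: (AI-tool hours, total work hours).
employees = {"ana": (0.2, 40), "ben": (3, 40), "chi": (6, 40)}
for name, (ai, total) in employees.items():
    share = ai_time_share_pct(ai, total)
    print(f"{name}: {share:.1f}% -> {usage_tier(share)}")
```

The distribution of employees across tiers is more informative than a single adoption rate, since it shows how many people are far below or above the range.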

Measure AI impact with ActivTrak

Without a system of record for how work happens, enterprises make multi-million dollar AI decisions on assumptions — not insight. ActivTrak transforms human and AI activity into insights leaders can use:

AI Insights connects AI activity to real business outcomes, showing leaders if tools free capacity or improve productivity.

AI Advisor provides data-driven recommendations for scenarios like backfills, hiring approvals and schedule adherence.


Interpreting results: Common AI measurement pitfalls and how to avoid them

Even with better visibility, AI measurement can go wrong in predictable ways. A strong program anticipates these pitfalls and designs around them.

The adoption equals impact assumption

High usage may reflect novelty, not value. Pair adoption metrics with workflow and outcome metrics — and track whether teams evolve from experimentation into repeatable integration.

The time saved disappears problem

Organizations frequently observe time saved without measurable business impact because recovered minutes are not intentionally reinvested. Explicitly track these shifts to see if higher-value work increased as low-value effort decreased.
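A simple way to track this is to compare how weekly hours are allocated before and after AI adoption, in percentage points. The activity categories and hours below are hypothetical and only illustrate the calculation.

```python
def shares(hours: dict) -> dict:
    """Convert hours per activity into percent of total hours."""
    total = sum(hours.values())
    return {activity: 100 * h / total for activity, h in hours.items()}


def shift_in_points(before: dict, after: dict) -> dict:
    """Percentage-point change in each activity's share of time."""
    b, a = shares(before), shares(after)
    return {activity: a[activity] - b[activity] for activity in b}


# Hypothetical weekly hours per person, before and after AI rollout.
before = {"admin": 12, "core_work": 24, "collaboration": 4}
after = {"admin": 8, "core_work": 24, "collaboration": 8}

shift = shift_in_points(before, after)
for activity, points in shift.items():
    print(f"{activity}: {points:+.1f} pts")
```

In this made-up case, administrative time falls by 10 points but core work does not grow. The recovered time went to collaboration, which is the pattern this pitfall describes.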

The quality-control tax

If AI output requires extensive correction, the time saved in drafting is lost in review. Measurement should incorporate rework and revision signals to identify where AI is a net gain versus a net shift in effort.
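The trade-off can be made explicit by netting drafting time saved against review time added. The function below is a minimal sketch with hypothetical per-item minutes, not a measured benchmark.

```python
def net_minutes_saved(
    draft_before: float, draft_after: float,
    review_before: float, review_after: float,
) -> float:
    """Drafting minutes saved minus extra minutes spent on review and rework."""
    drafting_saved = draft_before - draft_after
    review_added = review_after - review_before
    return drafting_saved - review_added


def verdict(net: float) -> str:
    """A positive net is a gain; otherwise effort has only moved to review."""
    return "net gain" if net > 0 else "net shift in effort"


# Hypothetical per-item minutes for two workflows.
proposals = net_minutes_saved(60, 20, 15, 40)  # drafting much faster, review heavier
reports = net_minutes_saved(60, 30, 15, 50)    # review cost exceeds drafting savings

print("proposals:", proposals, verdict(proposals))
print("reports:", reports, verdict(reports))
```

Applying this per workflow helps show where AI is worth scaling and where review effort needs attention first.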

Shadow AI and governance blind spots

Unapproved AI usage creates both risk and measurement error. If leaders don’t know where AI is used, they can’t accurately attribute changes or set appropriate guardrails.

A 30-day plan to quantify AI's productivity impact with evidence

Organizations do not need a months-long research project to begin measuring AI impact. A structured 30-day approach can establish baselines, identify early impact signals, and produce a defensible narrative for next-step investment and governance decisions.

- Week 1 – Baseline: Document current workflows, productivity constraints and capacity bottlenecks. Establish baseline usage of key applications and AI tools, and capture focus efficiency and collaboration load.

- Week 2 – Adoption and integration: Identify who uses AI, how consistently and where it shows up in critical workflows. Flag unapproved usage patterns that could create risk.

- Week 3 – Impact signals: Connect AI usage to measurable shifts in productivity, capacity, performance and focus. Assess rework and quality control effort and identify underutilized capacity.

- Week 4 – Decisions: Use evidence to determine what’s working, what’s not and where to invest next. Set governance guardrails, select workflows to scale and put operating mechanisms in place to redeploy capacity.

Looking ahead: Measure the change, then act with confidence

AI is already transforming how work gets done. The advantage will go to organizations that measure change and act with confidence. When leaders see how AI is embedded in workflows — and connect that usage to productivity, capacity, performance, focus and outcomes — they can scale what works, correct what doesn’t and govern responsibly.

ActivTrak provides the clarity to do both: An objective view across people, applications and AI, paired with the ability to turn measurement into action.

FAQs

What is the AI Measurement Gap?

The AI Measurement Gap is the gap between AI adoption and an organization’s ability to measure how AI changes work and affects productivity, focus and workforce capacity. Many companies can track AI usage but lack workflow-level visibility to connect usage to outcomes.

What should businesses measure to prove AI productivity impact?

At minimum: AI adoption and integration maturity, productivity and efficiency signals, quality and rework, capacity and performance outcomes, focus and fragmentation, and governance/risk. A single KPI is rarely enough. A balanced scorecard is more defensible.

Does high AI usage automatically mean higher productivity?

No. High usage can reflect novelty or fragmented work rather than value. Productivity Lab analysis shows employees spending 7-10% of their total work hours in AI tools have the highest productivity (95%) of any usage tier (across 2023-2025 combined), but that relationship should be treated as descriptive of the dataset rather than a guarantee in every organization.

How can leaders measure whether AI is improving focus or hurting it?

Track focus efficiency trends, average focused session length and changes in meeting/messaging load alongside AI usage. If collaboration and multitasking rise while low-value work does not decline, focus can fall even as output rises.

Why is AI governance part of productivity measurement?

Unapproved or noncompliant AI usage creates risk and can also distort measurement. Without knowing which tools are used and where they appear in workflows, leaders cannot accurately attribute changes or scale responsibly.

How does ActivTrak help measure AI impact on productivity?

ActivTrak provides an objective view of how work happens across people, applications and AI. AI Insights helps organizations establish adoption baselines and analyze how AI usage aligns with productivity, capacity and performance signals, while AI Advisor helps leaders ask natural-language questions and receive answers grounded in activity data.

How long does it take to quantify AI productivity impact?

A structured 30-day approach is often enough to establish baselines, identify early impact signals and make evidence-backed decisions about governance and where to scale AI in critical workflows.